Research Interests

3-D shape from motion

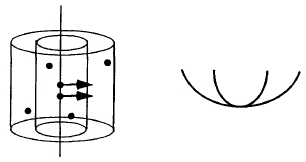

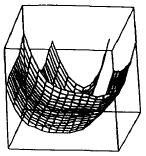

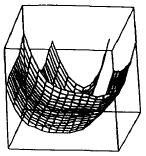

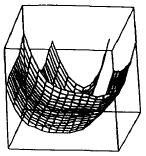

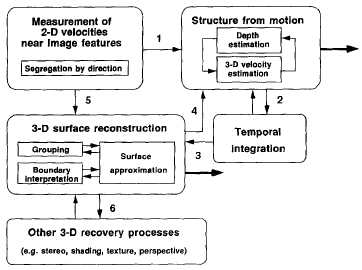

From the two-dimensional motion of image features, the visual system creates a vivid impression of the three-dimensional shape of moving surfaces. This 3-D percept is not instantaneous, but appears to emerge over an extended time through incremental changes. As the computed 3-D structure evolves, the visual system also constructs a representation of a continuous surface, even when moving features are sparse. These papers present the results of perceptual experiments on the recovery of 3-D structure from motion, and a model that combines the incremental recovery of 3-D shape with a surface reconstruction process:

Hildreth, E.C., Grzywacz, N.M., Adelson, E.H. & Inada, V.K. (1990) The perceptual buildup of 3-D structure from motion, Perception and Psychophysics 48, 19-36.

Hildreth, E.C., Ando, H., Treue, S. & Andersen, R.A. (1995) Recovering three-dimensional structure from motion with surface reconstruction, Vision Research 35, 117-137.

Treue, S., Andersen, R.A., Ando, H. & Hildreth, E.C. (1995) Structure from motion: Perceptual evidence for surface interpolation, Vision Research 35, 139-148.

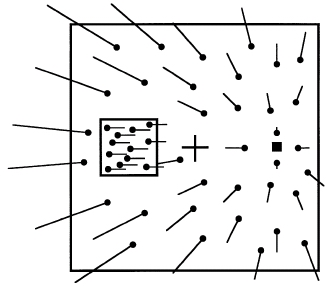

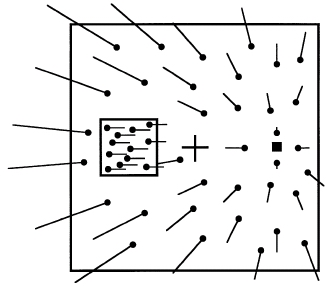

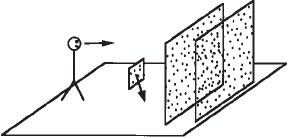

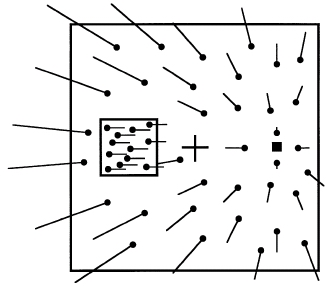

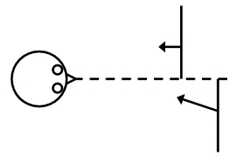

As we move through the world, the pattern of 2-D motion in our visual image also provides a strong cue to our 3-D direction of motion, or heading. Computing heading accurately is especially challenging when the visual scene contains independently moving objects. These papers present a computational model and perceptual observations of the recovery of heading relative to a scene that contains moving objects, and compare the attentional demands of judging heading with those of judging the 3-D trajectory of a moving object:

Hildreth, E.C. (1992) Recovering heading for visually-guided navigation, Vision Research 32, 1177-1192.

Hildreth, E.C. & Royden, C.S. (1996) Human heading judgments in the presence of moving objects, Perception and Psychophysics 58, 836-856.

Hildreth, E.C. & Royden, C.S. (1999) Differential effects of shared attention on perception of heading and 3D object motion, Perception and Psychophysics 61, 120-133.

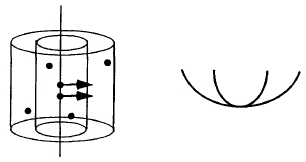

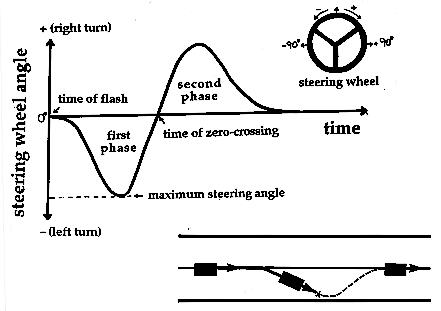

When driving in natural conditions, our attention is frequently drawn away from the steering task for short periods of time, often coupled with a reduction of visual input in the direction ahead of the vehicle. Experienced drivers can still perform accurate steering maneuvers in the absence of continuous visual input. We conducted experiments in a driving simulator to explore the visual cues that are used to guide steering during times of brief visual occlusion. When making lane corrections, drivers increase steering amplitude with larger heading deviations and perform corrections more rapidly at higher speeds, with or without continuous visual feedback. We developed two models of steering control to account for these findings. This paper describes the experimental work and steering models:

Hildreth, E.C., Beusmans, J., Boer, E.R. & Royden, C.S. (2000) From vision to action: Experiments and models of steering control during driving, Journal of Experimental Psychology: Human Perception and Performance 26, 1106-1132.

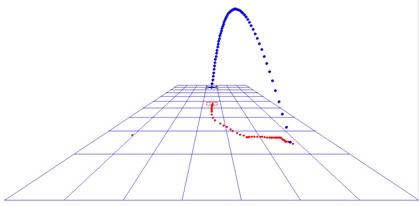

To catch a fly ball, what visual cues do we use to judge its 3-D trajectory? In her thesis work, Oly Fernando explored how performance of a virtual ball-catching task depended on factors such as the ball's rate of change of image size, absolute size, presence of a background reference grid, and portion of the flight trajectory observed. Results suggest that both static and dynamic size cues are important for judging the movement of the object in depth.

Subjects appeared to use different strategies to perform the task. Some clearly predicted in advance where the ball would land and quickly moved their cursor to the landing site, while others appeared to track the projection of the ball along the ground plane.

Fernando, R.B. (2008) Catching balls: What visual cues are used to judge the 3-D trajectory of a moving object?, Neuroscience Honors Thesis, Wellesley College.

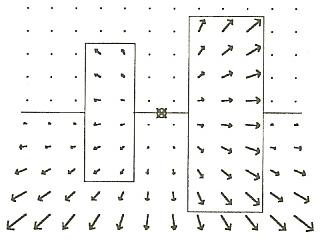

As we move through a complex, dynamic scene, we quickly perceive distinct objects that we can recognize, track or intercept. A critical step in this process is to locate object boundaries in the rapidly changing visual image, and to determine the relative depths between surfaces meeting at a boundary.

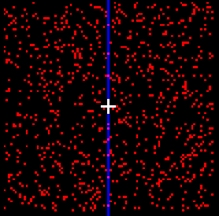

In collaboration with Dr. Constance Royden at Holy Cross College, I conducted perceptual experiments that examine how stereo and motion cues are used to analyze the depth order of surfaces at object boundaries. These experiments used three cues to convey relative depth: a change in the speed of image motion across a surface boundary (motion parallax), the disappearance of features on a surface moving behind an occluding object (motion occlusion), or a difference in the stereo disparity of adjacent surfaces. We compared perceived depth order for different combinations of these cues, incorporating conditions with conflicting depth order and conditions with varying reliability of the individual cues. We observed large differences in performance between subjects, ranging from those whose depth order judgments were driven largely by the stereo disparity cue to those whose judgments were dominated by motion occlusion. The relative strength of these cues influences individual subjects' behavior in conditions of cue conflict and reduced reliability.

Hildreth, E.C. & Royden, C.S. (2011) Integrating multiple cues to depth order at object boundaries, Attention, Perception and Psychophysics 73(7), 2218-2235.

Created by Ellen Hildreth || Last modified August, 2013