Roost Compiler Front End

- Assign: Tuesday, 19 February

- Checkpoint: Parsing complete, AST progress by Friday, 1 March

- Checkpoint: AST complete, Building Scopes and Resolving Names progress by Friday, 8 March

- Checkpoint: Building Scopes and Resolving Names complete, Type Checker progress by Friday, 15 March

- Checkpoint: Type Checker complete before Spring Break, Wednesday, but shows on calendar Tuesday, 19 March

- Due: Before spring break, Wednesday, but shows on calendar Tuesday, 19 March

- Reference:

Contents

Plan

In this project stage, you will implement the syntax analysis and semantic analysis components (the “front end”) of the Roost compiler. You will write the parser, the AST, the scope builder/name resolver, and the type checker. This is the longest project stage for the semester, due before Spring Break. There are weekly checkpoints and intermediate deadlines to ensure continued forward progress.

All parts of the Roost Compiler Requirements and Coding Guide apply to this project stage, including:

- General requirements for compiler output

- Programming support (including provided code) that will be useful in this (and other) stages

- Testing support

- Documentation and style guidelines

- Submission and evaluation information

- and more!

Package Structure

Support for the Front End components of the compiler should be implemented in the following packages:

roost.lex: lexer definitionroost.parse: parser definitionroost.ast: AST definitionroost.scope: scope, symbol table, and name buildingroost.typecheck: type checking

Language Feature Levels

The Roost Language Specification defines a core language and three standard extensions: parametric (aka generic) types; structure methods and signatures; first-class function closures. Think of the implementation as preceding in levels within each project stage:

- (AS) Tree Branch: core language.

- Generic Nest: core language, plus one of generic types or structure methods/signatures.

- Polymorphic Birdhouse: core language, plus both generic types and structure methods/signatures.

- Roosting on Clouds: core language, plus all three standard extensions: generic types, structure methods/signatures, and function closures.

As you work on each new compiler component or feature, you should generally implement these language feature levels in order. For example, make sure level 1 is fully working before starting level 2; 2 before 3; 3 before 4. The difficulty level of each feature will vary across each project stage; they are arranged here with the overall project in mind.

At each stage you may elect any level to complete.

Testing the Front End

The importance of testing in this stage (and all following stages) cannot be understated. Extensive testing will be crucial to help detect subtle incorrect behavior in your Roost compiler and fix it. Compiler bugs are the most pernicious of all bugs, so find them early and stamp them out! As with all stages, you should develop a set of several dozen test inputs to the compiler to test each compiler feature you add in the frontend.

Note that many tests that should be accepted by your lexer should be rejected at this stage (if they are not well-formed Roost programs). The same will be true of existing tests with each new checking feature you add in the frontend. If you continue to use the provided testing script, organize your new tests as follows:

src/test/roost/:parse: tests for parsing and AST buildingcore: tests for the core languageaccept: test programs that the parser should acceptreject: test programs that the parser should reject

typevar: tests for the parametric type system (generic types)accept,reject

sig: tests for structure methods and signaturesaccept,reject

closure: tests for first-class function closuresaccept,reject

scope: tests for scope building, name resolution, and checking- Same structure. All

parsetests are reusable here, but scope checking should reject some programs that parsing accepts.

- Same structure. All

typecheck: tests for type checking- Same structure. All

scopetests are reusable here, but type checking should reject some programs that scope checking accepts.

- Same structure. All

Organizing by compiler feature and language extension will make it

easier to track what tests are relevant at what stages of your

implementation. The provided testing

script allows running specific

sets of tests in this hierarchy. For example, test-roostc-status.py

parse/* runs all parse tests for use during parser development;

test-roostc-status.py scope/core scope/typevar runs only the scope

tests for the core language plus the typevar extension for use

during that stage.

Schedule

Submission Steps

Please see the updated submission steps in the Roost Compiler Requirements and Coding Guide.

The Front End project stage includes several intermediate checkpoints when individual features are due:

- Due 1 March 2019:

-

Parsing complete: Your compiler must accept syntactically valid Roost programs and reject syntactically invalid Roost programs with a syntax error. Your

README.mdmust contain a brief section on design of the parser specification, including any non-obvious elements in the grammar, and an indication of which language level you support. -

AST progress: Your

README.mdmust give an initial design of your AST package, including a brief overview of the type hierarchy of AST node types. The AST design need not be very detailed, but it must at least demonstrate that you have begun to think about how to organize ASTs. Ideally, AST implementation is underway before this date, but no submission of AST code is required. -

Submit using the

parserbranch.

-

-

Due 8 March 2019: All of the above, plus:

-

AST construction complete: Your compiler must generate ASTs for syntactically valid Roost programs and support the

--show-astcommand-line option. YourREADME.mdmust contain a finalized overview of your AST design and an indication of which language level you support. -

Scope progress: Your

README.mdshould contain an initial design of the symbol table structure and the scope-building process. Ideally, symbol table implementation is underway before this date, but no submission of symbol table code is required. -

Submit using the

astbranch.

-

-

Due 15 March 2019: All of the above, plus:

-

Building scopes and resolving names complete: Your compiler must build symbol tables for all scopes and resolve all name references that do not depend on type checking. Your compiler must support the

--show-scopescommand-line option. YourREAMDE.mdmust contain a finalized overview of your symbol table and name resolution design and an indication of which language level you support. -

Type checker progress: Your

README.mdmust contain an initial design of your type checker. Ideally, type checker implementation is underway before this date, but no submission of type checker code is required. -

Submit using the

scopebranch.

-

-

Due 20 March 2019:

-

Full front end complete:, including all of the above plus the type checker, the

--show-typescommand-line option, and all documentation of all parts inREADME.md, with an indication of which language level you support. -

Submit using the

typecheckbranch.

-

Get Ahead

Treat the above schedule as a starting point, but aim to get ahead of this schedule. Scope and type checking will be more involved than the earlier parts, especially if you implement 2 or more of the standard extensions.

Parsing

To generate the parser, you will use Java CUP, an LALR(1)

parser generator for Java, included in provided code. LALR(1) parsers

are essentially LR(1) parsers, except that they typically have a much

smaller automaton because they merge states containing the same items

when possible. All components of the parser should be implemented in

the roost.parse package.

Getting Started: Link the Lexer and Parser

In the lexer stage of the project,

you manually enumerated tokens in sym.java. In this stage that file

will be automatically generated by the Java CUP parser generator. To

link your lexer to the generated parser, follow these steps:

-

Get some provided code updates by running

git pull origin starter. Accept the default commit message about the merge. -

Edit your

Tokenclass definition to extent thejava_cup.runtime.Symbolclass:import java_cup.runtime.Symbol class Token(id: Int, line: Int, col: Int, value: Object) extends Symbol(id, line, col, value) // That's all!Alternatively, replace your uses of

Tokenwith uses ofjava_cup.runtime.Symbol. -

Edit the Terminals section of

roost.cupto declare each of the tokens from theroost/lex/sym.javaenumeration you wrote for the lexer as aterminalinroost.cup. -

Edit the start of the

roost.flexfile to look like this:package roost.lex; import roost.error.*; import roost.parse.sym; import java_cup.runtime.Symbol; %% %cup %class Lexer %public %function next_token %type Token %line %column %eofval{ return new Token(sym.EOF, yyline+1, yycolumn+1, null); %eofval} /* Your parts follow here... */Your lexer will now use a different

sym.javathat will be auto-generated by CUP. -

Change any code using your

Lexer’snextmethod to usenext_tokeninstead. -

Run the Lexer/Parser Generators task in IntelliJ and make sure that the lexer and (still mostly empty) parser both build successfully.

-

Remove the old

sym.javafile to avoid confusion:git rm src/main/scala/roost/lex/sym.java -

In the

compilemethod ofCompiler.scala, edit your code such that it constructs aLexerand performs the lexical analysis steps directly if only if the--show-tokensoption is enabled. Then add the code shown at the bottom to run your parser on the source file. Overall, this should look like:// No Lexer code outside this `if`... if (config.showTokens) { // Initialize a lexer for the source file. val lex: Lexer = new Lexer(new BufferedReader(new FileReader(config.sourceFile))) // Your code for consuming and showing the lexical tokens is here... } // FIXME: Add this code to run your parser. val programParser = new roost.parse.parser(new Lexer(new BufferedReader(new FileReader(config.sourceFile)))) val parseResult = programParser.parse().value -

git add,git commit, andgit pushthis set of changes.

For more details on how to integrate your parser with your lexer, you may wish to read Working Together section of the JFlex documentation, and Section 5 (Scanner Interface) of the Java CUP documentation.

Parser Specification

Implement a Java CUP parser specification in the file

src/main/scala/roost/parse/roost.cup. Use the grammar from the

Roost Language Specification as a

starting point. Modify this grammar to make it LALR(1) and free of

conflicts when you run it through Java CUP. The operator precedence

and associativity must be as indicated in the Roost specification. You

are encouraged to use Java CUP precedence and associativity

declarations.

Resolving a Reduce-Reduce Conflict

As noted in the language specification, there is an additional

ambiguity beyond those resolved by precedence rules for Roost

operators. By example, this function should return -1, not 0. Its

body is a sequence of a let expression followed by a final unary

negation expression, not a single subtraction expression:

fn f() -> i64 {

let x = 1 in {

x

}

-1

}You will most likely encounter this (and one or two related

ambiguities) as a reduce-reduce conflict in your CUP

specification. Precedence rules are well suited to dealing with

shift-reduce conflicts, but less well-suited for this reduce-reduce

conflict. To resolve this reduce-reduce conflict in favor of a

sequence of expressions (rather than a single expression), you may use

the -expect 1 flag of CUP after reading the relevant section of the

CUP documentation and thinking carefully about the effect of this

choice. To do this, edit build.sbt and find the two references to

CUP (inside the java_cup and dump definitions). In each of these

CUPs commands, add -expect 1 just before

./src/main/scala/roost/parse/roost.cup. (You’ll need to start a new

sbt shell for the Lexer/Parser Generators task to see this update.)

Grammar Actions

Initially, use the parser only to check the syntax of source programs. In the next step, use the parser only to build the AST. Separate passes over the AST will do all semantic analysis (including building scopes, resolving names, and type checking in this stage) as well as translation to an intermediate representation (in the next stage).

Recommended Lexical Revisions

How will your lexer treat x-5? Does it produce the tokens x, -,

and 5? Excellent. That keeps things simple. Does it produce x, and

-5? Even though it would be nice to treat negative number literals

as their own entire token, it will be more convenient for parsing if

integer literals are non-negative and the - symbol is a distinct

token.

Optional Lexer/Parser Challenge

Your lexer likely treats >> and >>> as their own atomic

tokens. This is an intuitive choice. It also interacts poorly with use

of > in multiple contexts in Roost language. This situation is

unlike other similar tokens such as, = and ==, which are clearly

distinct individual tokens. The occurrence of two = symbols adjacent

to each other can mean only the single toke ==. But for the use of

>, consider these valid syntactic forms: x >> 2 and

List<Stack<Turtle>>. Assuming your lexer defines >> as a token,

the first form is tokenized correctly (x, >>, 2), but the second

form is tokenized in a way that makes parsing it awkward: List, <,

Stack, <, Turtle, >>. To avoid this pitfall, we can simply

rewrite type parameterizations with a space if needed to disambiguate:

List<Stack<Turtle> > will tokenize well: List, <, Stack, <,

Turtle, >, >. Yet requiring ad hoc spacing is not an elegant

solution and does not match the Roost syntax definition. We could

alter the syntax, perhaps to use <: and :> for type

parameterizations, but this also feels like a hack.

There exist a number of potential workarounds for this issue that

accept List<Stack<Turtle>> without requiring spaces or making other

adjustments to the syntax. The best workaround also ensures that > >

is not treated as a shift operator, just >>. Your challenge, if you

choose to accept it, it to adjust your lexical and/or parsing

specifications to ensure that both left shift operators (e.g., >>)

and nested parameterized types (e.g., List<Stack<Turtle>>) are

parsed correctly, while retaining the most elegant and principled

approach you can find. Save this for last, only if you have spare

time. Our tests will politely use spacing to avoid encountering this

issue.

Syntax Errors

Your parser must detect and report the first syntax error it

encounters during parsing, if any. You are free to explore some of the

techniques we considered for error-correcting or providing information

about more than one syntax error, but this is not required. When

encountering a syntax error, your parser should raise a SyntaxError

exception carrying an informative message about the

error (or errors if you

choose to go further). The top-level compiler pipeline will catch

this exception as a CompilerError, print its

message, and terminate

with appropriate status. The exact

message is up to you, but it is highly recommended that you include a

line and column number of a position in the input program source code

where the error arises. This will help you test and debug your

compiler efficiently.

Debugging Your Grammar

While debugging you parser, you may find it useful to examine the

automaton and parse table that CUP produces from your

specification. This can be useful to understand ambiguity or

precedence problems, for example. To view the automaton and parser

table, run dump in the sbt shell. You can also add this sbt task

as a run configuration in IntellIJ like you did for the normal

lexer/parser generators. Or you can

run the following command in the top-level project directory to capture the output to a file:

java -jar lib/java-cup-11b.jar -destdir src/main/scala/roost/parse -dump src/main/scala/roost/parse/roost.cup 2>&1 > cup-dump.txt

Fair warning, the output can be large enough to surpass the scrollback of your terminal window. For my grammar of the complete Roost language (core plus all standard extensions), CUP produces over 200 LALR states and using the full automaton/table dump generates over 6500 lines of output. It is, however, well organized and searchable based on the CUP error messages about conflicts.

Abstract Syntax Trees

Design a hierarchy of Scala case

classes for the

various types of nodes in abstract syntax trees (ASTs) for the Roost

language. When the input program is syntactically correct, your parser

should produce a corresponding AST for the program. The abstract

syntax tree is the interface between the syntax and semantic analysis,

so designing it carefully is important for the subsequent stages in

the compiler. Note that your AST classes will not necessarily

correspond to the non-terminals of the Roost grammar. Use the grammar

from the language specification only as a rough guideline for

designing the AST. The AST should be defined in the roost.ast package.

Using Scala’s case classes will support pattern-matching over the AST data structure, which is a massive benefit in programming the remaining passes that inspect the AST. I advise defining all these classes (They’re usually tiny) in a single shared file.

AST Construction with Grammar Actions

Once you have designed the AST hierarchy, extend your parser specification to construct AST nodes in its actions.

CUP reads in the roost.cup file and generates parser.java and

sym.java. Actions for grammar productions in the roost.cup must be

written in Java, but your AST nodes and other supporting code will be

written in Scala. Creating a Scala object from Java code is typically

routine — just use new as usual. However, creating useful

standard Scala types like Seq and Option can be trickier since

there is no analog to those concepts in Java. The provided

roost.parse.ParseUtil (ParseUtil.scala) contains some small helper

methods to construct Seq and Option values. These methods can be

invoked from your Java action code to create and manipulate Seq and

Option values in the actions in your roost.cup specification. For

example: ParseUtil.cons(e, blockSubExprs). Feel free to add any

other methods here if they are helpful in bridging the CUP

specification and your Scala code. After finishing AST construction,

we will be done with the Java/Scala gap and will work solely in Scala

for the remainder of the project.

Show ASTs

When given the --show-ast command line option, your compiler must

print a human-readable text representation of the AST data structure

that your parser has generated. To recognize the --show-ast option

from the command line arguments in main and record its value in the

compiler Config, use the code that handles the --show-tokens

command line option in main as an example. Refer to the scopt

documentation for additional

examples.

The exact text format of your output is up to you, but it must show

all structural details of the AST. It need not include line/column

numbers. Similar to the lexer’s --show-tokens output,

the --show-ast output should be prefixed by # Start AST and # End

AST.

One option that can be useful is to create an AST pretty-printer

that prints out your AST using Roost syntax. You may also find it

useful to add another option that saves the pretty-printed AST to a

given .roost file. A decent extra test of your parser and AST

construction is to parse an original.roost file, pretty-print its

AST to a pretty.roost file, then parse that pretty.roost file and

pretty print it as pretty-pretty.roost. pretty.roost and

pretty-pretty.roost should be identical!

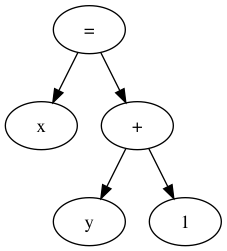

Visualizing ASTs with DOT (Optional but Recommended)

You may find it helpful to use the graph visualization tools in the

GraphViz suite of tools for printing out

information about the AST. The most useful tool is dot, which reads

a textual specification for a graph and outputs an image (PDF, PNG,

JPEG, etc.). For instance, the dot specification and output below

describe the AST of the expression x = y + 1. After generating such

a .dot file in dot-example.dot, run dot -Tpdf

< foo.dot > foo.pdf to create a PDF visualization in

dot-example.png.

dot

output.// Example dot input.

digraph G {

expr[label="="];

lhs [label="x"];

rhs [label="+"];

leftop [label="y"];

rightop [label="1"];

expr -> lhs;

expr -> rhs;

rhs -> leftop;

rhs -> rightop;

}

Using dot is highly recommended but not required. If you decide to use

it, I recommend creating a --dot-ast command line option that

enables dot generation for the AST and accepts a name of a .dot file

where it is to dump the source code. For example: roostc --dot-ast

a.dot program.roost. See the scopt

documentation for reference on

various kinds of command line option types.

Building Scopes and Resolving Names

Design a symbol table data structure to represent scope information

and any additional structures you wish to use for representing program

types. Your design should allow each AST node to access the symbol

table for its current scope (block, function, method, structure, or

signature, depending on which extensions to the core language you

implement) and should relate nested scopes appropriately. Support for

scopes, symbol tables, and name resolution should be implemented in

the roost.scope package.

Your constructed symbol tables should be available to all remaining compilation stages. I recommend that all subsequent stages refer to program symbols (e.g., variables, methods, class names, etc.) using references to their symbol table entries, and not using their string names. There are many ways to represent this name resolution reference. Perhaps the most straightforward is to create the symbol table structure as a mapping from each string name in scope to the AST node corresponding to the declaration of that name. Declaration AST nodes naturally carry all necessary metadata for using their declared name, such as type, etc. Given this recommended structure, you can simply augment each AST node that uses a name to carry a second reference to the AST node for that name’s declaration, and fill that reference later.

For example, if you have a VarDecl class to represent a local

variable declaration and a Var class to represent a use of a

variable in an expression, then Var should have an instance variable

decl of type Option[VarDecl] that initially holds None when the

AST is constructed, but is later filled by name resolution. This

approach, along with alternatives, is described briefly in EC 5.7.

Stages of Name Resolution

Some uses of names can be resolved unambiguously without type information and should be resolved at this stage:

- variable/parameter/function names

- structure type names

- signature type names

- type variable names

self

Resolution of references to field and method names depend on the types

of their target expressions, so their resolution must wait until type

checking. For example, the meaning of append in

e.append(x) depends on the type of expression e.

Uninitialized Variable Checks

Roost let blocks may declare variables without initializing

them. Your name resolution checker should additionally track what

variables are initialized and check that variables are used only after

they have been initialized, raising a compiler error otherwise.

Scope Errors

As with syntax errors, your scope-building and name

resolution code for this part should raise errors (likely as

exceptions using one or more newly defined subtypes of

CompilerError) that provide an informative error message about the

scope or name problem encountered in the input program. These semantic

errors should be labeled clearly to be distinguishable from other

kinds of errors.

If you have time, you may wish to use an alternative error delivery scheme that gathers errors and attempts to keep going and finish running the particular semantic check over the full program, rather than reporting only the first error. This is optional, but can greatly speed up your work as you debug your semantic checks.

Show Scopes

When given the --show-scopes command line option, your compiler must

print a text AST representation where each scope (block,

function, method, structure, signature) is annotated with symbol table

information and each resolved name reference is annotated so as to

associate it with its declaration. The format is up to you. As with

ASTs, you may also find it helpful to generate dot

output for ASTs annotated with symbol table

information. (Create a --dot-scopes option in this case.)

Design Hints

Decorating ASTs with Traits

For this part and type checking, you will need to “decorate” your AST nodes with extra information such as a symbol table reference, a resolved name-to-declaration reference, type information, etc. One possible approach is to augment classes in your AST class hierarchy with additional instance variables and methods for accessing these items. This works best if the decoration support can be added in the abstract classes within your AST hierarchy for easy reuse in subclasses.

However, it is frequently the case that there are cross-cutting AST decoration concerns that do not map cleanly to a single-inheritance hierarchy. Scala traits are quite handy for these cases: you can define decorations in traits and then “mix in” those traits wherever those decorations are needed across the AST hierarchy.

Source File Locations

To report semantic errors with information about where they appeared

in the source code, it will be necessary to attach that information to

your AST nodes. Note that, for every named symbol x in a production

(e.g., IDENTIFIER:x), CUP also introduces two variables xleft

and xright that capture the two numbers associated with the Token

or Symbol. Likely your lexer provided these as a line number (left)

and a column number (right). Even though CUP thinks they are character

offsets in the file, you can still use them however you like. Rather

than painstakingly changing all of your AST nodes to support extra

arguments, one option is to define a method for setting a source

location on an AST node. Then you can simply update each grammar

action call this method on RESULT (after constructing the relevant

AST node as it does now) with the relevant arguments taken from left

and right variables in the current production.

Separate Passes for Separate Jobs

It may be tempting to try and combine multiple semantic analysis tasks into a single pass over the AST. Don’t do it; it’s usually easier to keep them separate. This is especially relevant for symbol table building and name resolution: build the scopes and tables and decorate the AST with those. Then do a separate pass to resolve non-type-dependent names using the fully installed symbol tables.

Embrace Case Classes, Pattern Matching, and Options

Pattern matching with AST case classes is ideally suited to the style of recursive AST algorithms that will support most semantic checks in the Roost compiler. Get comfortable with Scala case classes and pattern matching and use them extensively.

Avoid the use of null in your compiler implementation at nearly any

cost. Wherever null invades, infuriating NullPointerExceptions

tend to follow. When your impulse is to use a null value to show the

lack of an actual value of type T, replace T with Option[T] to

make this possibility explicit. Use None for lack of a thing and

Some(thing) when you have one. When inspecting such a value, use

pattern matching. This helps force you to consider whether the thing

may be present or absent. It may initially feel verbose to have many

of these matches throughout your code, but there is great value in

their explicitness. (The Scala compiler will even help you remember if

you forgot to deal with one case.)

val maybeThing: Option[Thing] = ...

...

maybeThing match {

case Some(thing) => use(thing)

case None => thereIsNoThing()

}Supporting Builtin Functions

A simple way to support name resolution and type checking of Roost’s builtin functions is to add their names and types to the top-level symbol table and have symbol tables support an operation to lookup a builtin function name.

Sample Symbol Table Design

Here is a sketch of the style of symbol table design we discussed in tutorials. There are many design options.

package roost.scope

import scala.collection.mutable

import roost.ast._

import roost.IndentingPrintStream

import roost.error.Errors

object SymbolTable {

/** Definition nodes for builtins, acting much like FunDefs. */

val builtins: Map[String,BuiltinDef] = Map(

"println" ->

BuiltinDef("println", Seq(VarDef("message", Some(StrType()), None)), UnitType()),

/* ... */

)

}

class SymbolTable(owner: Scoped, val parent: Option[SymbolTable]) {

/** Map struct names to definition nodes. */

val structs: mutable.Map[String,StructDef] = mutable.Map()

/** Map struct field names to definition nodes. */

val fields: mutable.Map[String,FieldDef] = mutable.Map()

/** Map variable (including function) names to definition nodes. */

val vars: mutable.Map[String,Binding] = mutable.Map()

/** Declare various elements. */

def declare(id: String, d: StructDef): Boolean = { /* ... */ }

def declare(id: String, f: FieldDef): Boolean = { /* ... */ }

def declare(id: String, f: FunDef): Boolean = { /* ... */ }

def declare(id: String, v: VarDef): Boolean = { /* ... */ }

/** Lookup various elements. */

def lookupBuiltin(id: String): Option[BuiltinDef] = {

SymbolTable.builtins.get(id)

}

def lookupType(id: String): Option[TypeDef] = { /* ... */ }

def lookupField(id: String): Option[FieldDef] = { /* ... */ }

def lookupVar(id: String): Option[Binding] = { /* ... */ }

/* ... more added for type checking, loop checking, etc. ... */

}Type Checking

After constructing the AST and symbol tables, your compiler will

analyze the AST and perform semantic checks. These semantic checks

include type-checking (according to the type system in the Roost

Language Specifciation) and any scoping rules that could not be

enforced while building the symbol tables, plus all of the other

requirements for semantic checks described in the language

specification. Support for type checking should be implemented in the

roost.typecheck package.

Now that you are experienced building AST passes, most parts of the type checker for the core language should be fairly straightforward. If you have time, implementing one standard extension (parametric types or methods/signatures/subtyping) for the type checker adds quite a bit of interest. Implementing both is a lot.

Type Errors

As with syntax and scope errors, your type checker should raise type errors that provide an informative error message about the type error encountered in the input program. These type errors should be labeled clearly to be distinguishable from other kinds of errors.

Show Types

When given the --show-types command line option, your compiler must

print a text AST representation where each variable with

inferred type and function or method call with inferred type parameters

is annotated to show the types that have been inferred. (For example,

x would be inferred to have type i64 in let x = 4 in ...) The

format is up to you. As with ASTs and scopes, you may also find it

helpful to generate dot output for ASTs annotated with

symbol table information. (Create a --dot-types option in this

case.)

Design Hints

Type Checking of Expressions

There are two common approaches to design the type checking code for expressions. In one style you could write a type-checking function that runs in a top-down style, where a prescriptive type-checking function takes a pair of arguments – an expression to check and the type it should have – and passes a boolean judgment on whether the expression can be attributed that type.

An alternative is somewhat more bottom-up and inference-based: it takes a single argument – an expression to check – and infers the type (if any) that this expression can take based in its structure and any subexpressions. Then at the current level of type checking, this inferred type can be compared to the type required by the current context.

Each approach has its own benefits, but the latter may end up more

attractive overall. There are a handful of cases where the necessary

type of an expression is not own from context. Method calls and field

accesses fall in this category. Roost also does requires limited type

inference for variable definitions in let blocks, which may omit an

explicit type if they supply an initial expression (from which the

type can be inferred).

Interaction of Standard Extensions

As will become clear from the Roost language specification, the type system for the core language is simple. While each standard extension adds some interest on its own, the intersection of the extensions can get quite interesting! If you plan to support more than one standard extension at the type checking stage, definitely implement one feature at a time and study the type system carefully.